Text Generation

Transformers

PyTorch

English

llama

text-generation-inference

unsloth

trl

sft

conversational

Instructions for using appvoid/arco-2-reasoning-20k with libraries, notebooks, and local apps.

- Libraries

- Transformers

How to use appvoid/arco-2-reasoning-20k with Transformers:

    # Use a pipeline as a high-level helper
    from transformers import pipeline

    pipe = pipeline("text-generation", model="appvoid/arco-2-reasoning-20k")
    messages = [
        {"role": "user", "content": "Who are you?"},
    ]
    pipe(messages)

    # Load model directly
    from transformers import AutoTokenizer, AutoModelForCausalLM

    tokenizer = AutoTokenizer.from_pretrained("appvoid/arco-2-reasoning-20k")
    model = AutoModelForCausalLM.from_pretrained("appvoid/arco-2-reasoning-20k")
    messages = [
        {"role": "user", "content": "Who are you?"},
    ]
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        tokenize=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)

    outputs = model.generate(**inputs, max_new_tokens=40)
    # Decode only the newly generated tokens, skipping the prompt
    print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use appvoid/arco-2-reasoning-20k with vLLM:

Install from pip and serve model

    # Install vLLM from pip:
    pip install vllm

    # Start the vLLM server:
    vllm serve "appvoid/arco-2-reasoning-20k"

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:8000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "appvoid/arco-2-reasoning-20k",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

Use Docker

docker model run hf.co/appvoid/arco-2-reasoning-20k
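The curl call above can also be made from Python. The following is a minimal sketch using only the standard library, assuming a server is already running locally and exposes the OpenAI-compatible `/v1/chat/completions` route shown above. The helper names `build_chat_request` and `ask` are hypothetical, chosen for illustration.

```python
import json
import urllib.request

MODEL_ID = "appvoid/arco-2-reasoning-20k"


def build_chat_request(prompt, base_url="http://localhost:8000"):
    """Build a POST request for the chat completions endpoint.

    base_url is an assumption: port 8000 matches the vLLM example,
    while the SGLang example listens on port 30000.
    """
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, base_url="http://localhost:8000"):
    """Send the prompt to a running server and return the reply text."""
    request = build_chat_request(prompt, base_url)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-compatible responses carry the reply in choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("What is the capital of France?")` should return the model's reply as a string. Pass `base_url="http://localhost:30000"` to target a server on the SGLang port instead.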

- SGLang

How to use appvoid/arco-2-reasoning-20k with SGLang:

Install from pip and serve model

    # Install SGLang from pip:
    pip install sglang

    # Start the SGLang server:
    python3 -m sglang.launch_server \
        --model-path "appvoid/arco-2-reasoning-20k" \
        --host 0.0.0.0 \
        --port 30000

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:30000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "appvoid/arco-2-reasoning-20k",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

Use Docker images

    docker run --gpus all \
        --shm-size 32g \
        -p 30000:30000 \
        -v ~/.cache/huggingface:/root/.cache/huggingface \
        --env "HF_TOKEN=<secret>" \
        --ipc=host \
        lmsysorg/sglang:latest \
        python3 -m sglang.launch_server \
            --model-path "appvoid/arco-2-reasoning-20k" \
            --host 0.0.0.0 \
            --port 30000

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:30000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "appvoid/arco-2-reasoning-20k",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

- Unsloth Studio

How to use appvoid/arco-2-reasoning-20k with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

    curl -fsSL https://unsloth.ai/install.sh | sh

    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888

    # Then open http://localhost:8888 in your browser
    # Search for appvoid/arco-2-reasoning-20k to start chatting

Install Unsloth Studio (Windows)

    irm https://unsloth.ai/install.ps1 | iex

    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888

    # Then open http://localhost:8888 in your browser
    # Search for appvoid/arco-2-reasoning-20k to start chatting

Using HuggingFace Spaces for Unsloth

    # No setup required
    # Open https://huggingface.co/spaces/unsloth/studio in your browser
    # Search for appvoid/arco-2-reasoning-20k to start chatting

Load model with FastModel

    # Install first (shell): pip install unsloth
    from unsloth import FastModel

    model, tokenizer = FastModel.from_pretrained(
        model_name="appvoid/arco-2-reasoning-20k",
        max_seq_length=2048,
    )

- Docker Model Runner

How to use appvoid/arco-2-reasoning-20k with Docker Model Runner:

docker model run hf.co/appvoid/arco-2-reasoning-20k

Uploaded model

- Developed by: appvoid

- License: apache-2.0

- Finetuned from model: appvoid/arco-2

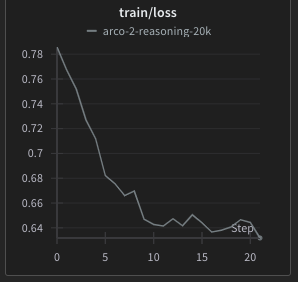

Fine-tuned on 20k samples from the KingNish reasoning dataset.

The prompt format follows the template used in that dataset:

<|im_start|>user

What is javascript?

<|im_end|>

<|im_start|>reasoning

I can answer directly to this question.

<|im_end|>

<|im_start|>assistant

That's a great question! JavaScript is a programming language that is commonly used to create interactive web pages and applications. It's known for its ability to create dynamic, interactive content and it's used by many web developers to create web pages, mobile applications, and more.
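To make the three-turn layout above concrete, here is a minimal sketch that renders a question in this format and splits a completed transcript back into its `user`, `reasoning`, and `assistant` turns. The helper names `format_prompt` and `parse_turns` are hypothetical, and the exact newline placement is an assumption read off the sample above. Leaving the `reasoning` turn open for the model to complete is likewise an assumption, not something the model card states.

```python
import re

# Matches one turn: a role header, then content up to <|im_end|> or end of text.
TURN_PATTERN = re.compile(
    r"<\|im_start\|>(\w+)\n(.*?)(?:<\|im_end\|>|\Z)",
    re.DOTALL,
)


def format_prompt(question):
    """Render the user turn and open a reasoning turn (assumed layout)."""
    return (
        "<|im_start|>user\n"
        f"{question}\n"
        "<|im_end|>\n"
        "<|im_start|>reasoning\n"
    )


def parse_turns(text):
    """Return a {role: content} dict for every turn found in text."""
    return {role: content.strip() for role, content in TURN_PATTERN.findall(text)}


# Example transcript built from the sample above (answer shortened).
transcript = (
    format_prompt("What is javascript?")
    + "I can answer directly to this question.\n"
    + "<|im_end|>\n"
    + "<|im_start|>assistant\n"
    + "JavaScript is a programming language."
)
turns = parse_turns(transcript)
print(turns["reasoning"])
print(turns["assistant"])
```

Separating the `reasoning` turn from the `assistant` turn this way lets an application show only the final answer while keeping the reasoning available for logging.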
